Brain-Computer Interface Controlled Role-Playing Games

Introduction

Hawke Robinson is Executive Director and founder of the 501(c)3 non-profit RPG Research. Both our founder and the organization are huge advocates for accessibility and inclusiveness.

Our founder's background in nursing, recreation therapy, gaming, neuroscience, music, and more has inspired his decades-long endeavor to use non-invasive neuro and bio technologies for people with accessibility needs, as well as to enhance experiences for the general public.

We support accessibility not only through our training, advocacy, and accessible mobile facilities, but also through our active projects to make gaming accessible to all. RPG Research is a 501(c)3, 100% volunteer-run, non-profit charitable research and human services organization.

Main website: www.bcirpg.com - alias: https://www.rpgresearch.com/bci-rpg

The project was renamed from erpg to bcirpg.

The open source GitHub source code repository is now https://github.com/RPG-Research/bcirpg (previously https://github.com/RPG-Research/erpg).

The project Wiki: https://github.com/RPG-Research/erpg/wiki

Join the Development and Testers Team!

Interested in working on this project as a developer, QA, or user tester?

Remember we are a 100% volunteer-run (unpaid) organization.

We are primarily using Godot as our game development tool.

We have openings for the following roles:

- User-testers (requires that you have your own BCI headset, unless you are in the Spokane, WA area, in which case you can use ours): 5 openings.

- QA1 Function Tester: 2 openings - Apply

- QA2 Code Reviewer: 2 openings - Apply

- QA3 (UAT) Tester: 1 opening - Apply

- Entry-level Godot developer: 2 openings remaining. - Apply

- Junior Godot developer: 3 openings remaining. - Apply

- Senior (General) Developer: 2 openings remaining. - Apply

- Branching Story Designer (Twinery) - 1 opening remaining

- Game 2d Graphics Designer - 1 opening

- Game 3d Graphics Designer - 1 opening

- Game UI/UX Developers - 1 opening

- Brain-Computer Interface Developer - 1 opening

- Team lead - filled.

- Project Manager - filled

- Architect - filled.

- CTO - filled.

See https://rpgresearch.com/jobs for more detailed descriptions.

For additional information, email info at rpgresearch dot com or post comments in the project's GitHub.

Weekly team meeting is Sunday 10:00 am to Noon Pacific Time (PST/PDT) via our online platform at RPGSN.net.

Background History

Our founder was first involved with role-playing games in 1977. He began software development and engaging with the online community in 1979, through the University of Utah and various BBSes.

He has been involved with VR equipment since the late 1980s, using Amigas (he still has a working Amiga 2000) and other equipment over the decades.

He developed Virtual Reality Modeling Language (VRML) websites in the mid-to-late 90s.

He experimented with early AR in the late 90s and early 2000s using early PDAs and "smartphones" long before iOS and Android existed (Nokia, Palm phones, etc.), combined with early location and GPS technologies.

He has experimented with biofeedback and bio-controlled devices since 1996 (for example, a mouse cursor controlled by a single fingertip clip).

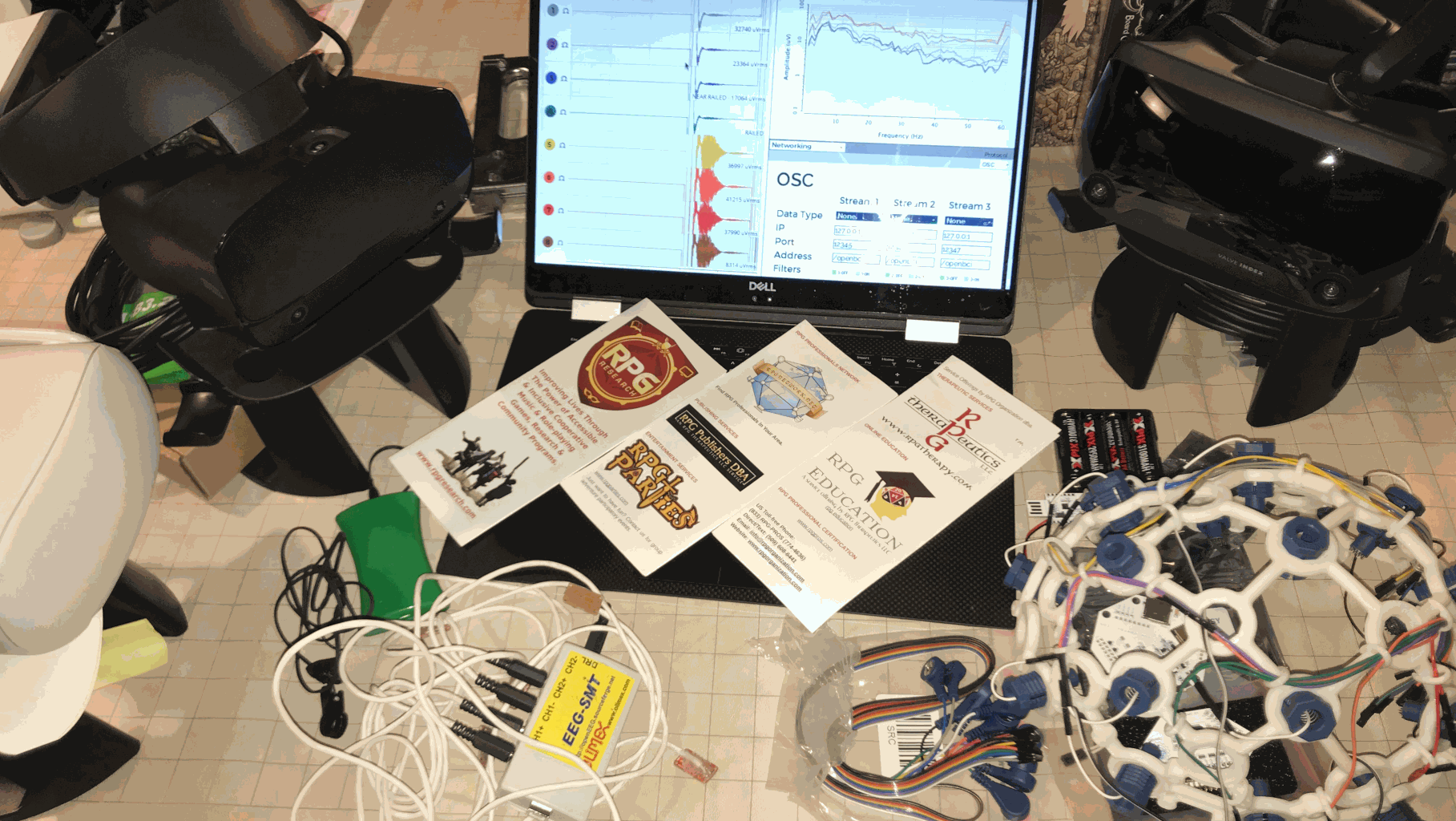

Since about 2005 he has been working on integrating electroencephalogram (EEG) and, later, brain-computer interface (BCI) equipment with computers, mobile devices, VR, and AR. This has included experimenting with a wide range of VR and AR hardware and software, including their use in educational, artistic, social, and therapeutic programs.

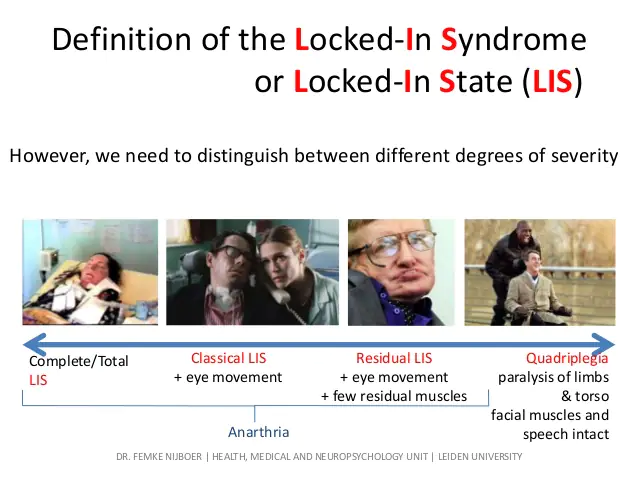

LIS & CLIS - Locked-in Syndrome and Complete Locked-in State

Our founder has been strongly motivated to find a solution for people living with Locked-in Syndrome (LIS) and Complete Locked-in State (CLIS) since 1990, when he cared for a young adult as a nurse's aide and LPN trainee at Doxie Hatch Medical Center, and additionally for many others with various injuries and neurological disorders as a habilitation therapist at Hillcrest Care Center. Ever since, he has wanted to find a way to use technology to help them reconnect socially, free their active minds from the physical prison of their bodies, and gain back some of their lives.

The goal, and the real-world test of success, is whether someone with profound disabilities, including locked-in syndrome (LIS) or complete locked-in state (CLIS), can be freed from that prison to engage socially in complex, multiplayer, cooperative, problem-solving role-playing games.

Program phases and updates on our live streams and Github: https://github.com/RPG-Research/

Ultimate in RPG Accessibility and Immersion

The greatest accessibility potential lies in Brain-Computer Interface (BCI) technologies based on Electroencephalogram (EEG) technologies, integrated with AR and VR (and eventually back into the physical world through robotics), allowing a person to interface with computer systems purely through their thoughts.

When linked with haptics, robotics, AR, VR, and other technologies, BCI also has the potential to deliver the ultimate in immersive experiences.

We have been hard at work experimenting with EEG and BCI equipment with music and RPGs since 2004.

Since 2019 our research and development team have been working on Project Ilmatar - https://github.com/rpgresearch/erpg/wiki - an opensource, online, multiplayer, turn-based, cooperative-play, electronic, role-playing game designed from the ground up for maximal accessibility including BCI control support.

Update, Fall 2020: we migrated our content from the previous individual Git repository to the organization GitHub: https://github.com/RPG-Research

As usual, our projects are little-known, long-running, and have almost no funding. It is thanks to our wonderful volunteers worldwide that we accomplish anything. We hope some day perhaps to have more support financially, but for now we keep leading in innovations thanks to the wonderful efforts of these generous people helping a few hours every week, and we share openly with the world in the truest form of opensource, following our philosophy of "the rising tide of shared knowledge floats all boats" to help improve the human condition globally.

While the software is free, the hardware we have to purchase to realize this technology fully is expensive. We are currently using OpenBCI, which still costs from hundreds to even thousands of dollars per headset. The RPG Research board approved $2,500 USD to purchase the full R&D bundle from OpenBCI. We are now working from two directions, the software side and the hardware side, toward full integration.

We also hope to loop back from the virtual world and integrate robotic devices controlled through BCI equipment to enable participants with disabilities to more fully engage in all role-playing game formats.

We stream the development meetings live each week on Saturdays from 10 am to Noon Pacific Time (PST/PDT): https://youtube.com/rpgresearch

If you miss the live stream, Patreon supporters get access to the recorded videos at least a month before the general public as a thank you for their donation support. https://patreon.com/rpgresearch

High-level Roadmap Development Phases

Phase 0 - EEG I/O controllers in NWN on Linux

Learn the basics of EEG, bio and neuro feedback, and computer interaction, then attempt to replace the regular keyboard or other I/O controls for a PC-based video game (preferably on Linux, due to its more flexible I/O controls) and play the game with only EEG and/or bio-monitoring inputs. Start with Neverwinter Nights on Linux. Complete.

Phase 1 - Game design prototype in NWN:EE on Linux with EEG/BCI for I/O

Train the new development team on the desired game development concepts through prototype use of the Aurora Toolset for NWN. Create a new adventure module that will be used as a test bed for future prototypes. The chosen adventure is Shakespeare's The Tempest. Experiment with controls using EEG and BCI equipment with the Enhanced Edition of Neverwinter Nights on Linux. Complete.

Phase 2 - Game from scratch TUI version BCI I/O online turn-based multiplayer

Create from scratch a text-only, multiplayer, online, turn-based, cooperative RPG that can be played with only OpenBCI (or similar) equipment. Currently nearing the end of the design and documentation steps and starting the actual coding steps. We will use GUI tools with the Godot engine for the GM tools, but play must be entirely TUI and able to run with the simple inputs of OpenBCI. Use the same adventure blueprint as Phase 1. IN PROGRESS. Begin incorporating components from the AI RPG and AI GM (ai-rpg.com) projects as needed, especially for NPCs and creatures, but also as options for PCs, world sociopolitics, events, ripple effects from PC actions, etc.

Phase 3 - Add GUI and Add Optional [Matrix] Integration Support

Add basic real-time graphics, or pre-recorded action videos triggered by game events (like Dirk the Daring in Dragon's Lair), on top of the existing TUI-based game.

Phase 4 - Add Natural Language (NL), Machine Learning (ML) / Artificial Intelligence (AI) / Neural Networks (NN) Enhancements

To further improve accessibility and overall playability, incrementally add technologies related to natural language, Machine Learning (ML), Neural Networks (NN), Artificial Intelligence (AI), and other related fields in different areas of the platform, as appropriate and as resources allow.

Phase 5 - Add xR (VR/AR/MR)

Add xR-related options (optional!) to the available graphical interface experiences. Add AR, GPS, and global real-world location play to the game. If viable, include "holographic" UI options so that no glasses or similar equipment are required.

Phase 6+ Add Robotics and other Physical local realm interaction features

Integrate gyro, GPS, and other technologies to add further physical local realm experiences. Migrate the BCI tools to work in the physical world through robotics and wireless triggers, so that players can interact with a combination of AR and physical devices. This includes operating dice-rolling devices, using robotic arms to pick up and move objects, and moving miniature tokens enhanced by AR overlaid animation, so that people with LIS/CLIS can play at the same physical table, or larp, with other players in the same physical location, not just online.

Phase 0 (completed) 2006 - 2014 OpenEEG

Neverwinter Nights (NWN) Diamond Edition on Linux controlled by OpenEEG equipment.

Basic control of PC movement. Routing of I/O through an OpenEEG 5-channel headset.

Began in 2006, functional by 2010, and work stopped by 2014.

Very rudimentary movement and an on/off menu (or other assigned on/off hotkey) were mapped and working. This was not sufficient for LIS/CLIS, but helpful for some disabilities. Latency and error rate were very high, however.

We need greater signal resolution, wider frequency ranges, and more CPU power. OpenEEG and civilian-grade system power still leave something to be desired, but are getting closer. They are not sufficient for adding AR/VR, however.

Phase 1 (completed) 2019 - 2020

Neverwinter Nights (NWN) Enhanced Edition (NWN:EE).

Using Windows for Aurora Toolset module development.

Not using EEG or BCI equipment at this phase. Creating prototype adventure that will be used in Phase 2 onward for baseline R&D.

Development prototyping and team training / team building: use the NWN:EE Aurora Toolset to create a custom adventure. Began August 2019 and completed successfully in August 2020 (still adding enhancements over time). Later, see whether newer-generation OpenBCI equipment can be used.

Phase 1b - (pending) - OpenBCI

Is it possible to configure OpenBCI to run this game as well as, or better than, OpenEEG did for NWN Diamond Edition in Phase 0?

We do not expect full functionality or multiplayer features to work, but we hope that the higher-quality 16-channel setup may make solo play viable.

Phase 2 (in-progress) 2020 - Current

Scope and build, from the ground up, an electronic role-playing game (ERPG) that can be controlled by accessibility equipment, especially newer-generation EEG/BCI equipment such as the OpenBCI hardware (among others).

OpenBCI

Godot

Text-based UI (TUI)

Brain-computer-interface-controlled, text-only, turn-based, menu-driven play.

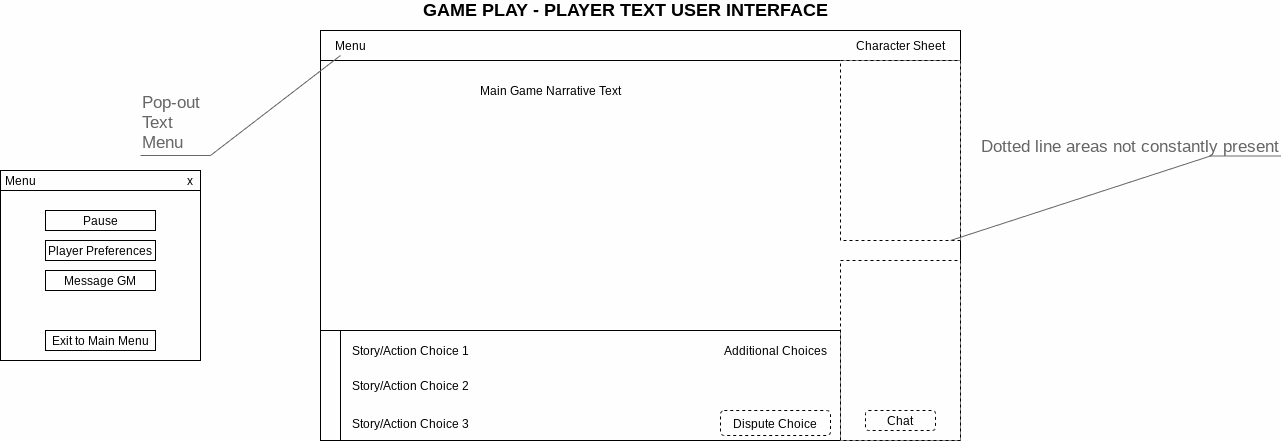

BCI RPG Game player, Player Text User Interface

Game is:

- online

- multi-player

- team-focused

- turn-based

- initially text-only

- menu-driven

- non-chat (use other chat solutions as needed) (not a MUD/MUSH/MOO) - using Matrix-synapse as underlying network communication infrastructure, especially for chat

- electronic role-playing game that can be played with many different adaptive devices but MUST be fully playable (without chat) using only the human brain of the player(s) (BCI). Ultimately must be playable by LIS/CLIS population.

- Opensource
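To illustrate how a menu-driven, turn-based game can be played with very simple inputs, here is a minimal sketch of single-switch menu scanning: the highlight advances through the options on each tick, and one binary "select" signal picks the highlighted option. This is a common accessibility technique and not necessarily the project's actual design. The function name `scan_select`, the menu labels, and the idea that the BCI yields one boolean per tick are all assumptions made for illustration.

```python
from typing import Iterable, Optional, Sequence


def scan_select(options: Sequence[str], signals: Iterable[bool]) -> Optional[str]:
    """Return the option highlighted when the first True signal arrives.

    Hypothetical model: each element of `signals` is one scan tick. False
    means "no selection, move the highlight to the next option"; True means
    "select the currently highlighted option".
    """
    if not options:
        return None
    index = 0  # the highlight starts on the first option
    for selected in signals:
        if selected:
            return options[index]
        index = (index + 1) % len(options)  # wrap around at the end
    return None  # input ended without a selection


# Hypothetical menu for one player turn.
menu = ["Look around", "Talk to NPC", "Move north", "Pass turn"]
# Two ticks with no selection, then a select signal on the third tick.
choice = scan_select(menu, [False, False, True])
print(choice)  # prints "Move north"
```

Because the whole interaction reduces to one yes/no signal per tick, the same loop could in principle be driven by any adaptive device, with the tick length tuned to the player's signal latency and error rate.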

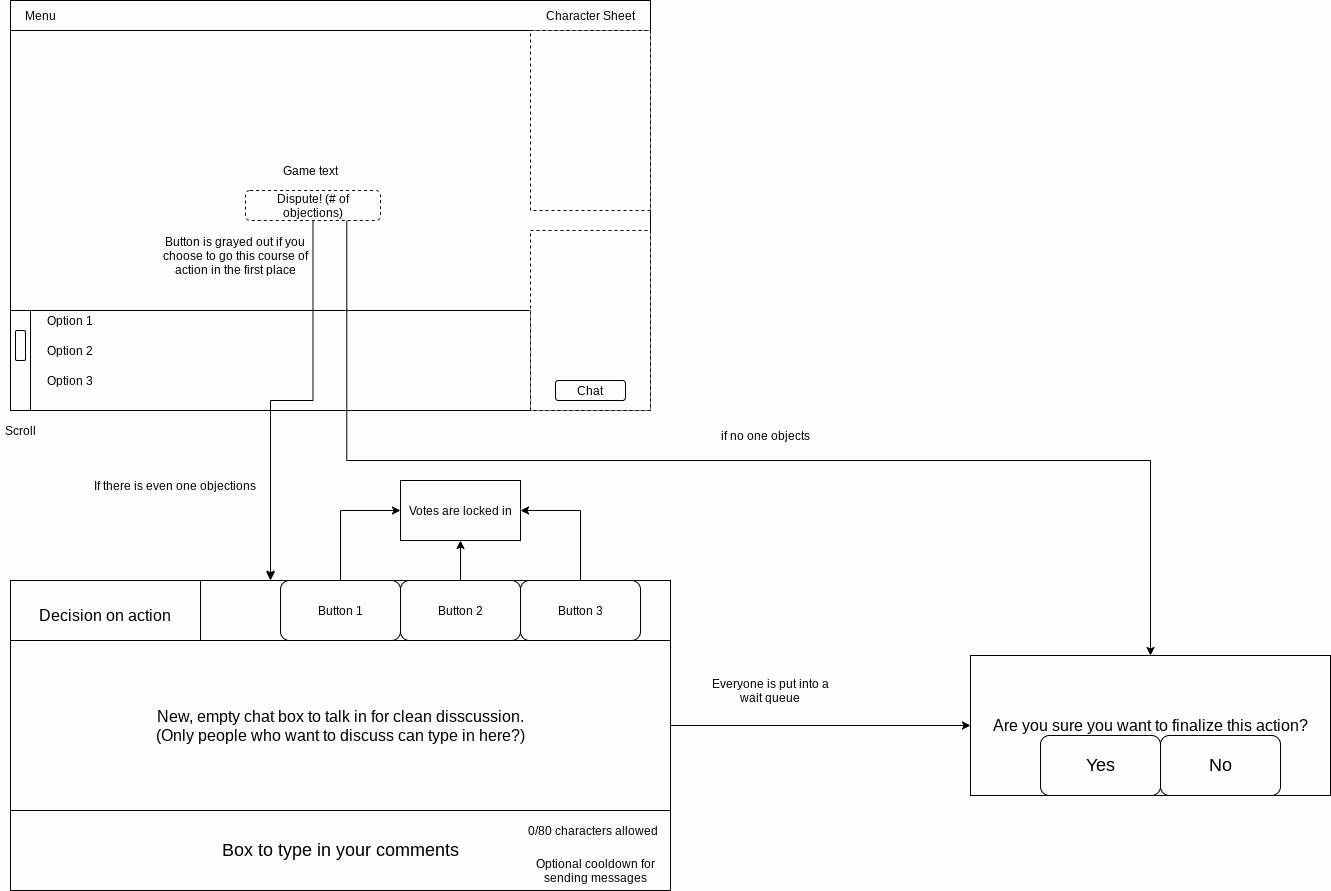

Turn-selection process in noncombat situations for the online, multiplayer, turn-based, live (real-time), brain-computer-interface-controlled role-playing game.

Began scoping August 2020, currently actively in progress.

Meeting weekly (broadcast live).

- Supports Game Master (GM) tools to participate in the game in DM roles with the players during the live game.

- Ability to pause game, save game, restore game.

- Supports multiple genres (player selected).

- Multiple underlying RPG systems (player selected).

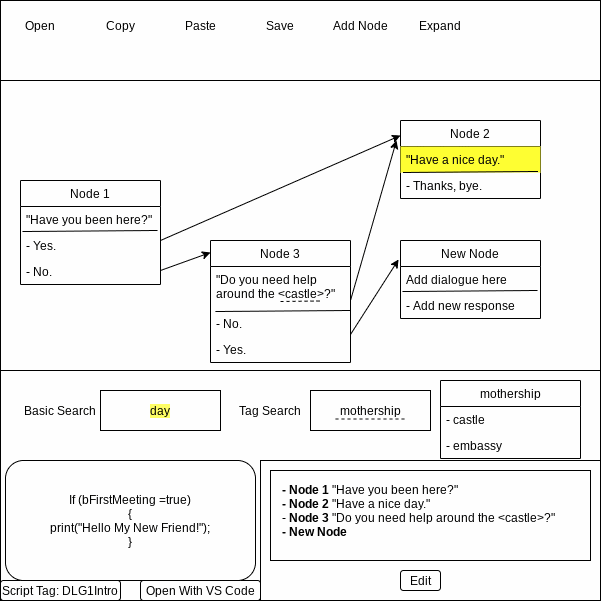

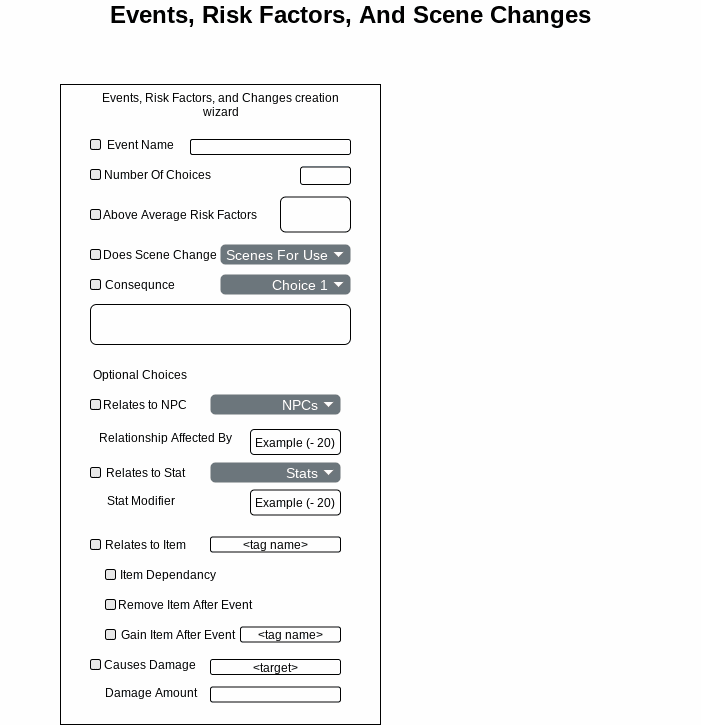

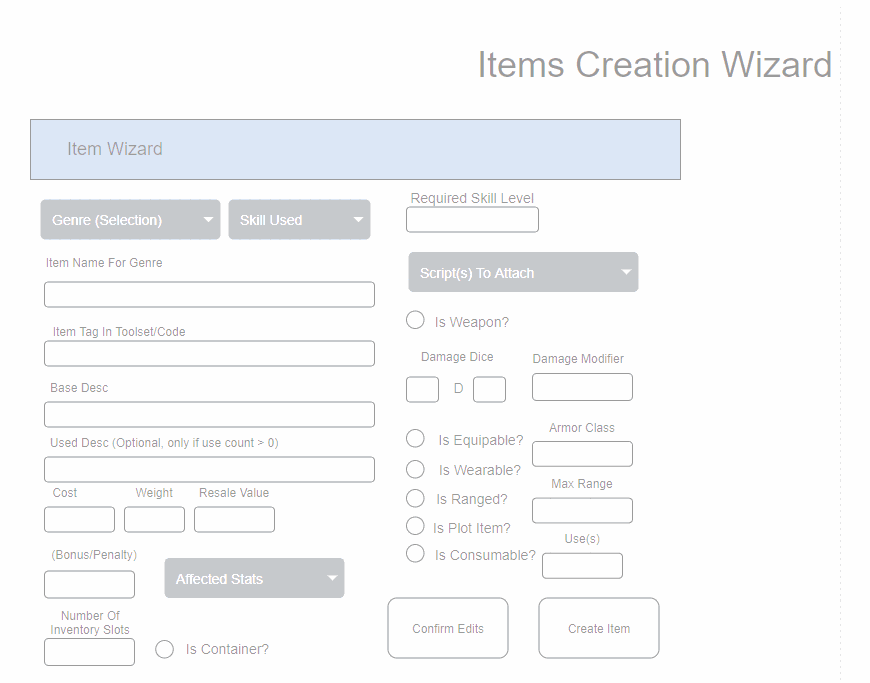

- Phase 2b Toolset provided for potential game master to create new adventures (does not need to meet BCI requirements for toolset).

- Phase 2c - Replicate the NWN Tempest adventure using our Toolset to play in the BCI RPG (text-based play)

Infrastructure and high level flow overview diagram, updated January 2024

Older Updates:

More scope details and full documents in Brain Computer Interface Role-Playing Game BCI RPG Github repository.

PC / NPC interaction dialog menus for BCI RPG text-based wireframe and example flow

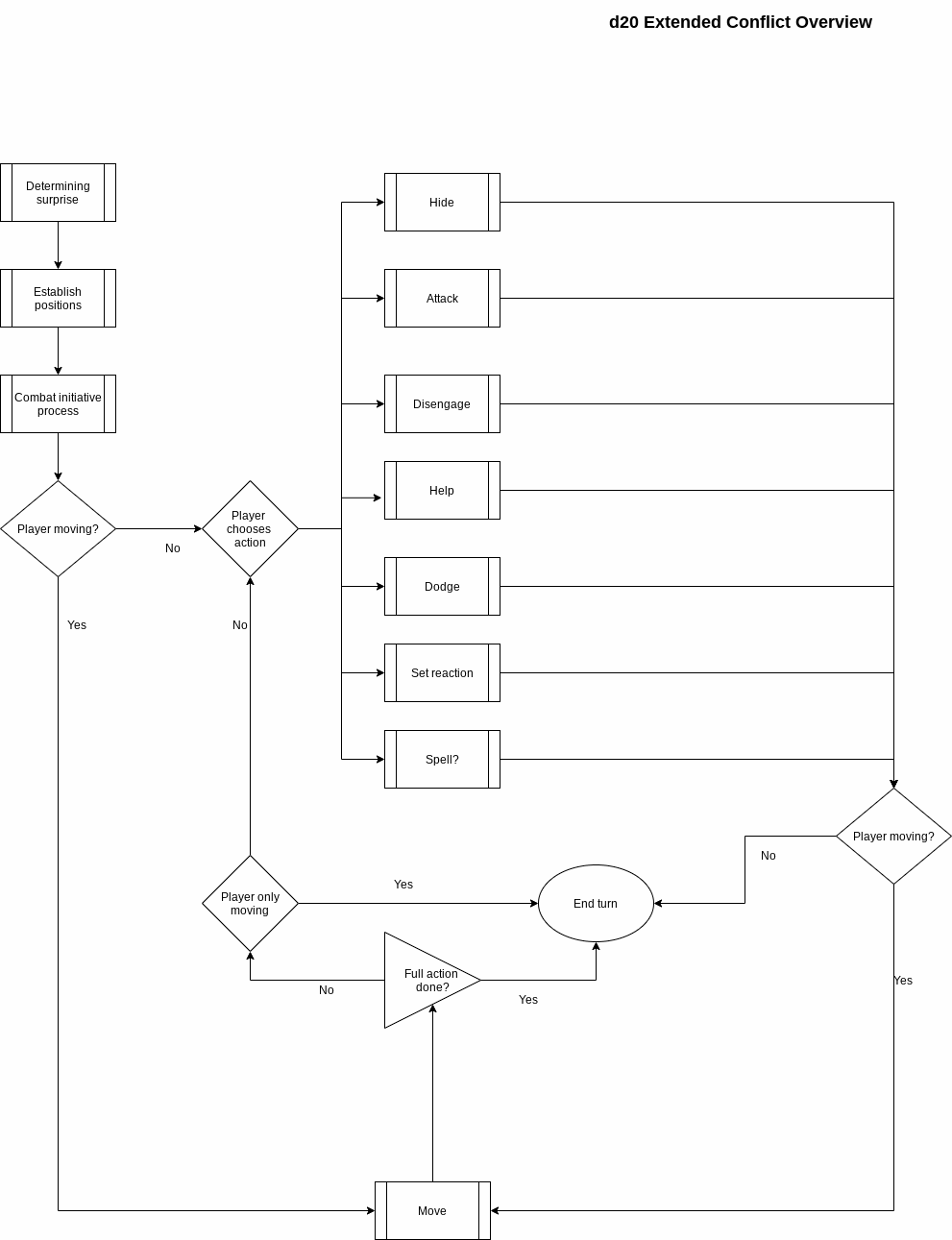

d20 RPG extended conflict overview flowchart. Includes Dungeons & Dragons 5th edition (D&D 5e) and open d20 variants.

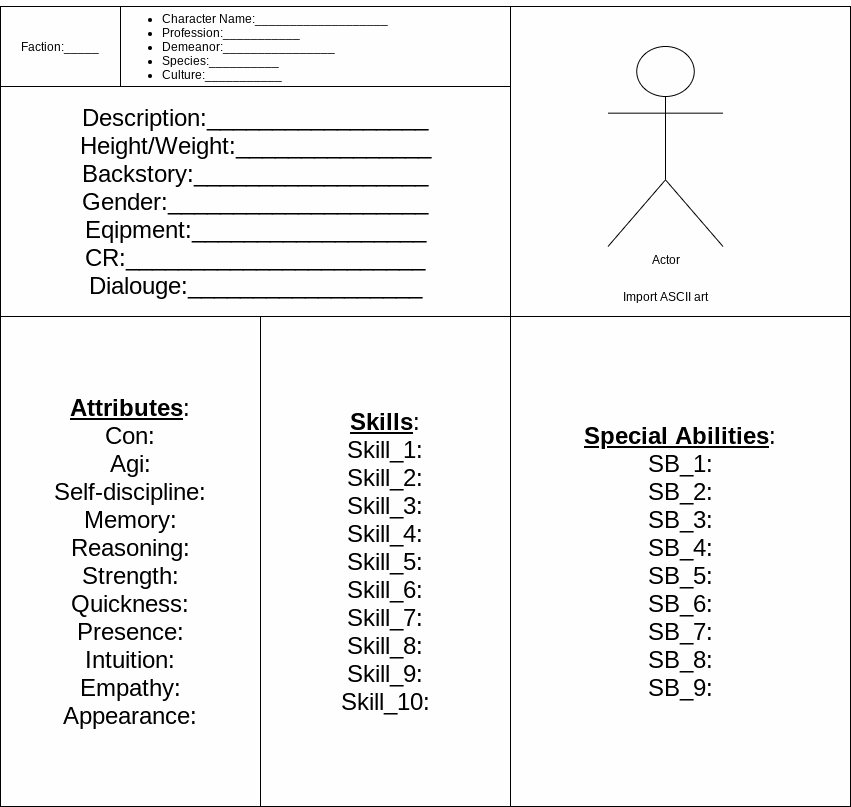
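As a rough illustration of the core d20 mechanic that such a conflict flow builds on, the sketch below resolves a single check: roll a twenty-sided die, add a modifier, and compare the total against a target number (such as a Difficulty Class or Armor Class). The function name `d20_check` and the example values are assumptions made for illustration, and the sketch leaves out advantage/disadvantage, critical hits, and everything else in the flowchart.

```python
import random
from typing import Optional, Tuple


def d20_check(modifier: int, target: int,
              rng: Optional[random.Random] = None) -> Tuple[int, int, bool]:
    """Roll 1d20 + modifier and compare the total against a target number.

    Returns (natural_roll, total, success). Success means total >= target,
    following the usual d20 "meet or beat" convention.
    """
    if rng is None:
        rng = random.Random()
    roll = rng.randint(1, 20)  # inclusive on both ends
    total = roll + modifier
    return roll, total, total >= target


# A seeded generator makes the example repeatable for testing.
roll, total, success = d20_check(modifier=3, target=15, rng=random.Random(42))
print(f"rolled {roll}, total {total}, success={success}")
```

Passing the random generator in as a parameter keeps the rule logic separate from the dice source, so a physical or GM-entered roll could be substituted without changing the check itself.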

General UI for BCI RPG

Graphical User Interface (GUI) for server management of BCI RPG. The use case assumes that players are in LIS/CLIS, but that the DM / GM / Therapist or server admin is able to use a full GUI for server administration.

Phase 2b - Develop Module Creation Tools with UI

The module creation toolset is not designed for BCI or LIS/CLIS users. The assumption is that Game Master / Dungeon Master, Educator, or Therapist will use this toolset to create new custom adventures (like the Aurora Toolset is used to create adventures for NWN).

This requires full graphical tools at this point. (We would love to create a BCI-controlled toolset eventually, but that is much more advanced than our current team is ready for. Hopefully we can circle back to this down the road.)

Phase 2c - Create Tempest Adventure Using New Custom-built Toolset

In Phase 2 all the basic play mechanics, and the module creation tools are built.

We already created the storyline and branching narrative in Phase 1 with Twinery and implemented in NWN:EE with Aurora Toolset.

Now we need to take the same story and recreate it using our "ModGod" toolset.

Phase 2d: Begin Linking Artificial Intelligence Tools with BCI-RPG (ai-rpg and/or ai-gm)

Once the more manual NPC, weather, etc. reactions are working smoothly, begin hooking in the AI tools from the ai-rpg and/or ai-gm projects.

Phase 3 GUI (pending)

Add a graphical interface (instead of just a text interface) with pre-recorded audio and video events tied into the text-based events (a la Dragon's Lair). Later, add more dynamic graphics, audio, and video for a smoother experience. Early hooks were planned to begin around late 2021.

Further enhance the AI integration.

Phase 4 AI (pending)

To further improve accessibility and overall playability, incrementally add technologies related to natural language, Machine Learning (ML), Neural Networks (NN), Artificial Intelligence (AI), and other related fields in different areas of the platform, as appropriate and as resources allow.

Phase 5 xR (pending)

Add xR/VR integration and motion integration while still fully supporting BCI play. Include VR accessibility settings for people with very limited or no physical mobility (we hope to integrate third-party drivers for this rather than develop those drivers ourselves).

Phase 6+ (pending)

Bring accessibility BCI RPG back to the tabletop.

- integrate robotics equipment controlled through the BCI controls of the game play

- enable rolling physical dice

- moving physical miniatures

- Integrate "holographic" experiences with robotics, AR, etc.

- and other features through the BCI control

This would happen in a TRPG environment rather than an online ERPG, while using the tools and code created for this now more well-rounded, continually evolving ERPG to enable TRPG play.

Your donations mean that more people can have access to our free programs.

Please donate today to help us with these efforts.

Or Volunteer Today!
